I spent the last ~3 days troubleshooting a bug in SNES9x. This requires some backstory in re: the anticipated question, "Why are you even using SNES9x? [emulator] is much better!"

This began with a silly idea for a Twitch stream. I would get four friends together and, in the vein of Twitch Plays Pokemon, we would all control the same videogame. Unlike that event however, everyone would have direct control of Player 1, and every $rand seconds, control would switch off to a random different player.

I knew ZSNES couldn't do this, because only one netplay participant can control a given virtual gamepad input at a time. SNES9x seemed to show no such limitation however, and I tested it with a friend and it appeared to work.

To manage the "gameshow" I wrote a terrible Python script, as quickly and dirtily as possible, that simply slept for random seconds, then chose a random .WAV from a list and played it.

This is a really filthy script. It opens two audio devices - the default Windows one, and the "VB-Audio Virtual Cable". The first so I and the Twitch stream could hear, the second to be mixed with my mic audio and directed into my Discord input so my contestants would get it in near realtime. Surprisingly, this script works fine, despite it being the worst code I've written since I was a teenager.

I did the stream, and it was a total failure. Turns out I misunderstood what I was seeing when I tested; the other player was actually on gamepad 2 and players 3 and 4 were on consecutive pads. Damnit!

We tried a couple other emulators with no success, then gave up on the project and ended the stream. But I was intent on making this happen, so I got cracking on the source code.

I started with ZSNES and gave up on it really fast. Hacking ZSNES sucks on Windows.

Next I got the SNES9x source and found it readily compiled in Visual Studio. "Readily," here, means I spent four hours fighting with it until I figured out that I needed to do a CLEAN wipe and reinstall of Visual Studio and select the Windows XP and Windows 8 compatibility libraries. Right away I had a binary.

The SNES9x source is terrible. It's not readable, it's not well laid out, the source files have nonsense names - server.cpp contains server code, but client code is in netplay.cpp, and both use the function prefix NP, for netplay, so you never know where anything is. There are no comments. I've seen about five total.

Implementing the many-players-on-one-gamepad hack was fun and I'll detail it later. That was not actually in the forefront of my mind though, because something odd had happened during the stream. My poor girlfriend was excluded from the fun even before we found out the emulator wouldn't do what we wanted, because when she attempted to join, everyones emulator threw an error and disconnected.

We tried several orders of operations and found that it happened reliably when the fifth player joined, no matter who that was. And that really bugged me, so after I got the gamepad hack working (I thought), I set about trying to solve the crash.

First, I will regale you with some details to titillate your mind, especially if you aren't a network programmer. When writing your own layer 7 network protocol, the procedure is:

With, say, HTTP, the work has been done for you. If you're writing SOAP or whatever you're past layer 7. You're writing layer 7+7, you're doing everything with unlimited length strings. This is a protocol right on top of TCP however, purpose built for a low latency game application.

I've worked on a few C programs before that had their own protocols. The first problem you have to overcome is: you don't know when you're done getting input.

While Ethernet and IP have a concept of a "packet", TCP does not, and the recv() method requires you to specify how many bytes you're /expecting/, how many to pop off the buffer of incoming TCP data. But how can you know how much to recv() without having already recvd?

The answer is you have to bootstrap. You have to begin by having the server send an amount of data of an absolutely known size. So a protocol might send four bytes down the line that represent a length of data; the client, once it establishes the socket, reads four bytes, stops, decodes them, then reads the number of bytes specified by that first four bytes. Once it's read that much data, it has to look for another header of the same size in order to proceed - and it's critical that that is exactly what comes down the pipe next.

A 'bytestream' protocol like this depends on an absolute guarantee that the server will always send data in precisely the right order, never stop in the middle of some data to switch gears and send something else, and tag everything with an accurate header. This is called "framing." I am not an expert on this stuff, so I assume more sophisticated applications have methods of recovering framing if they become lost, but overall it all runs on dead reckoning.

So the initial failure mode was this: I would start the game server and connect three other clients. In server mode, the host opens a session to itself, quake style, so that's a total of four clients. This worked perfectly.

Then I would connect a fourth client (so five connections including the host) and the following would happen:

- the host window would disappear

- all four other windows would report "Bad magic number in server heart beat"

I am not a professional programmer, but I have been coding on and off since I was twelve. I have a decent amount of expertise in narrowing down a problem, and I figured this was a protocol error because it was crashing both ends. If the server had a simple local bug, like an unhandled exception causing it to crash, the clients wouldn't report that error. They would hang for an indefinite amount of time, the socket would time out, and they'd shut down the connection. Maybe crash at that point.

It's possible the server could have sent a malformed packet due to memory corruption and then exited when the corruption caught up to it shortly afterwards, but unlikely. My presumption was that the server was working "correctly", but sending data the clients couldn't understand.

The fact the server was closing was unusual - why was there no error? Well, as soon as I ran the server with a debugger attached, Visual Studio notified me of heap corruption.

Unhandled exception at 0x00902ED9 in snes9x-debug.exe: Stack cookie instrumentation code detected a stack-based buffer overrun. occurred

I stared at the server code and realized they underallocated a buffer. Easy fix there. After I applied that, the server didn't crash anymore but the clients were still throwing this "Bad magic number." So now I turned around and attached the debugger to the client. No exceptions in the client - whatever the issue was, it was being handled, otherwise I wouldn't be getting the popup. So, I started adding breakpoints and single-stepping.

I was stunned at how patient the process was. The server just let my client sit, not responding to TCP, for minutes while I single-stepped through the code. It was actually really cool to debug like that. And I found my problem in the servers protocol code.

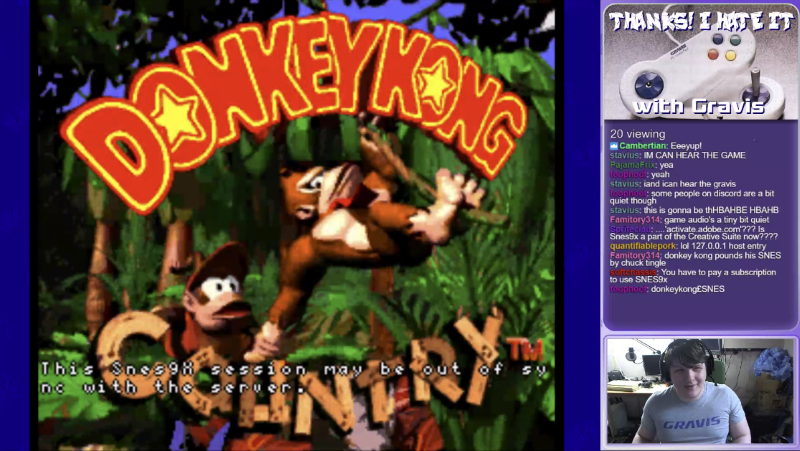

Mind you, there is NO protocol documentation, so I had to reverse engineer it. This protocol is custom so Wireshark wasn't a ton of help - just generic TCP packets with "Data". I set a breakpoint in the "receive data" routine and then stepped through as each packet came in

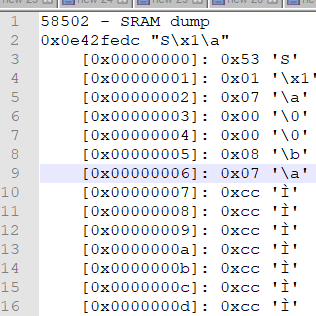

Each time a packet was received I copied the buffer contents to a text file, then stepped through the client code to see what code paths it followed for that packet, and labeled each one in turn until I understood the connect and sync procedure.

After the TCP handshake, the initial conversation goes:

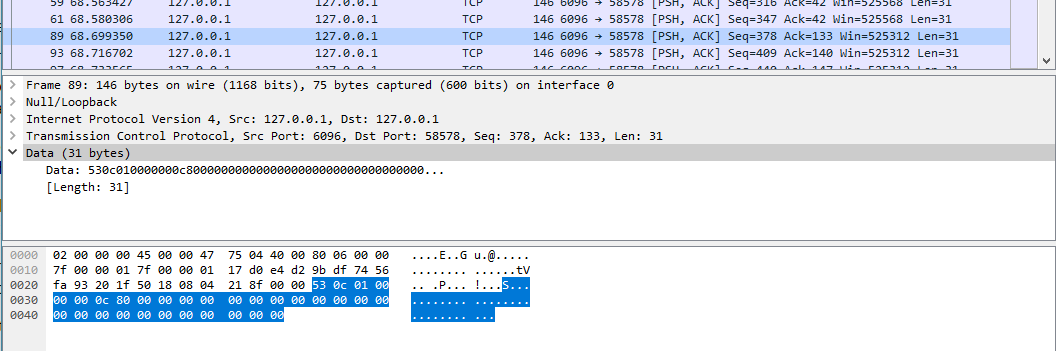

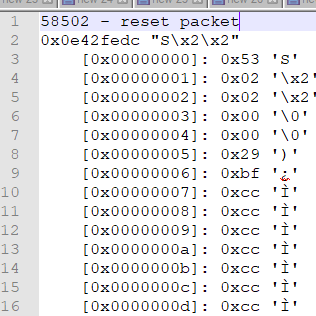

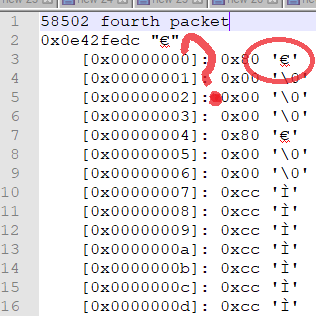

Each packet begins with a header structure consisting of a "magic number", 0x53 or "S", then an 'opcode' telling the client what to do, and the rest is data unique to that opcode. As you can see above, the first three packets begin with 0x53, but the fourth - which causes the error - begins with 0x80.

I should note, these are dumps of the raw output of the recv() routine. This is what the client put in the buffer the first, second, third and fourth times it tried to read one complete server instruction from the TCP stream. This is not what was sent on the wire - matching these up with the packets in Wireshark was really tough, so I gave up on it and just stared at the dumps for a bit.

I learned elsewhere in the source that the status of each joypad is packed in 4 bytes with, I believe, each bit representing the state of up, down, left, right, A B X Y L R, select, start and I think a little more for mouse, light gun etc. So the joypad update packet length is 4 bytes for each joypad in use. NP_MAX_CLIENTS, the constant set in the source representing the max players supported by snes9x, is 8, so the length of data for all eight possible joypads is 4 * 8 bytes.

After banging my head against this for eight hours, I finally put my nose to the grindstone and figured out how to relate the packets in Wireshark to what I was getting in the app. What I realized after I did this - and you may have as well, if you looked hard at the pictures above - is that the fourth packet shows two of the same pattern: 0x80 followed by 0x00s, exactly what you see in the last packet that contains a proper header.

I also noticed the packets in Wireshark changed size if you added more players. This tells us something critical: the server is sending only as much joypad data as there are clients, so the client has to be reading a header value to find out how long the packet is supposed to be and how many bytes to take out of the TCP stream.

I visited this over and over and over. I probably single-stepped the code 40 times. Finally, I was staring at the code, and - I swear to god, you know that story of the dude who ran into a tree in the desert, the only tree in a 250-mile radius?

This is one of, literally, three or four comments in the entirety of netplay.cpp. I had no idea how to parse it earlier because I didn't know where the opcode sat in the packet, so I was just ignoring it. But when I came back to it I realized I had figured that out, so I stared at it a little longer, and suddenly saw the problem. You might already see it too.

joypad data count

is the top two bits

the top

TWO

BITS

TWO BITS

STORES A MAXIMUM

OF FOUR UNIQUE VALUES

MEANING AT FIVE PLAYERS IT OVERFLOWS AND WRAPS AROUND

They didn't allot enough room in the protocol format for more than four players worth of joypad data. Not because the packet can't hold it, but because the length field in the header was too short. And that's why, when i looked at the packet that was killing my client, it inexplicably had joypad data instead of a header - it was reading one joypad's worth of data out of the first packet, thinking it was done, and then looking for a new packet and grabbing the REST of the first packet instead.

Here's the kicker: This can't be practically fixed.

The only way to do it is to completely rewrite the protocol and work over the ENTIRE codebase, because joypad updates might be used in lots of places. Tons of regression testing, which I wasn't willing to do. I don't have the time or energy or C++ skill.

I could also have done something like extended the length of the server reset packet and packed in a client count but you know what's easier? I hardcoded both ends.

Server always sends 8 structs, client always decodes 8. who cares. done. christ almighty. works now. This means the packets are full of "useless data" if less than 8 players are playing, but in 2018 we don't need to save 27 bytes in each packet - It'll Be Fine.

I guess if you want a moral to this story: never assume the person before you did a reasonable job. There could be gaping holes in their logic. Nothing precludes it, so don't let yourself believe assumptions like "the protocol is well-defined and fundamentally works." In this case, the protocol was deeply flawed.

Contact me at articles@gekk.info - I would love to hear your input, stories, disagreements, etc.